As can be seen in some previous posts, I have a strange preference for old hardware (see my Shameless Call for Hardware and The Vintage Hardware Stack), especially old storage devices. The feeling of happiness and sheer joy when an old monster wakes up, boots, and everything goes green is hard to beat. Although much of the equipment can no longer deliver the performance needed nowadays, it still has many of the functions found in current setups, albeit in monolithic form. A hobby like this has its disadvantages, though. A high power bill? True. Transportation? Not always easy. Many lost hours trying to find that one missing cable? Also true. But the stuff that I trade for cake is not technically end of life (I mean, you can still learn a lot even if it's just 1GbE iSCSI or 4Gb FC), and most of the parts still function. Except for one part, and that's the Waterloo of a lot of systems: batteries. Be it a cache battery in an HP EVA4100 or 4400, on a BBWC HBA, or the Standby Power Supply (SPS) of an EMC CLARiiON CX3-10. Let's have a rundown of some systems that I have right now.

EVA4100 / 4400 / 6100

Imagine you've just received an HP EVA4400. It has been lying around at $Customer for some time, and they finally decided to get rid of it. Three shelves with 1TB, 300GB and 450GB disks and a dual-controller 4Gb FC system: about 20TB of raw capacity and a lot of spindles, so this should make a fairly decent lab system. So far so good. Cabling done properly, power connected, here we go. All blinkenlights and disks green, superb. Only two amber lights on two modules at the front of the HSV200 controller chassis. No beeps. After finally resetting the password of the unit and logging in to CVE, all seems good: no orange exclamation mark anywhere. But when presenting LUNs to a server, they don't show up in Disk Management on the initiator. It seems like the LUNs do not get presented at all. Hm, that's odd. Let's dig deeper into Storage system – Hardware – Controller Enclosure. Ah! Cache battery operational state: 1 & 2 failed. Well, that's a pity. HP decided that LUN presentation should be disabled when the cache batteries are dead, to protect data integrity: in case of a power failure, data could not be kept in volatile cache and would be lost. Not a bad decision, don't get me wrong. But for the sake of the hobby I would love to have an override. There isn't one, though. Batteries dead = no LUNs presented. With no budget, that seems like the end of the story: new sealed batteries go for over $250 on eBay, for example. Oh, and I've buried an EVA4100 and an EVA6100 the same way, all with dead cache batteries.

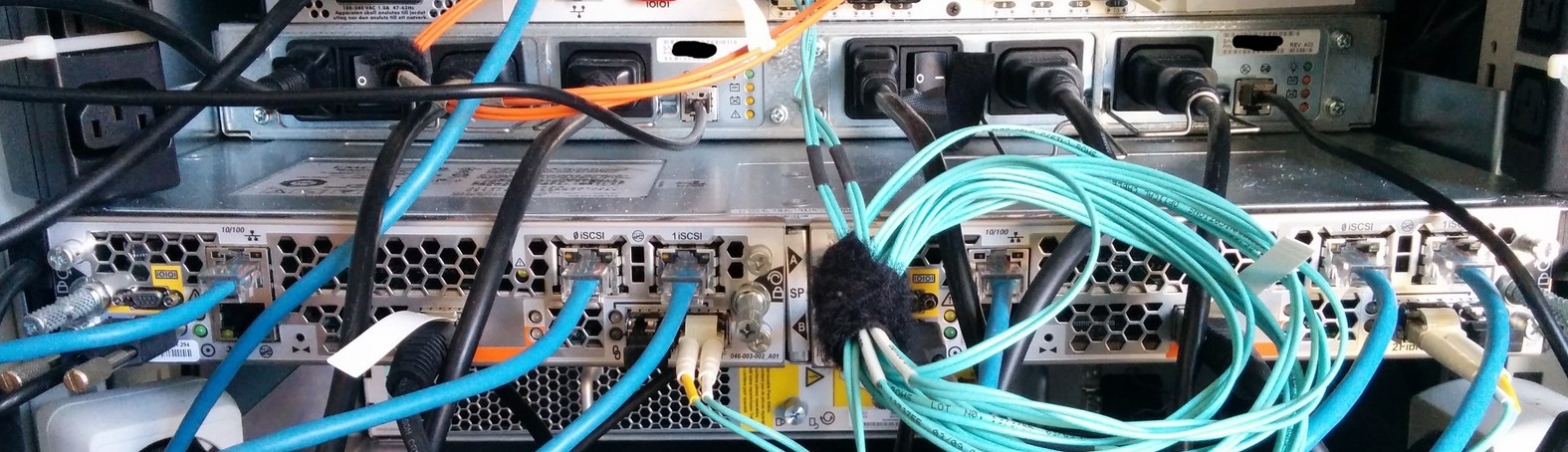

EMC CX3-10c

Nice, another donation. Let's get my hands dirty on Navisphere (hurray) with this EMC CX3-10c. It's the smallest model in the CX3 series, but still nice to practice with.

It has 2 iSCSI ports and 2 FC ports per Service Processor, so multiprotocol storage! The SPs come in a separate chassis, and the Disk Array Enclosures hold 16x 300GB FC disks connected through 4Gb/s FC back-end ports. Hey, what's that heavy 1U unit? Ah, the Standby Power Supply! EMC built a mini UPS for the CX series: two SPSs in a 1U chassis, providing power to both the SPs and the DAE. The status of each SPS is reported to its SP over an RJ45 to Micro (!) DB9 sense cable, one per SP/SPS pair. But after cabling the lot and booting, the lower amber light on both SPSs is lit. That means a missing or dead battery. Luckily the unit still works, but write-back cache is disabled. So. Things. Are. Even. Slower. Than. Normal. Darnit.

The Micro DB9 connectors: one for a PPP (!) over serial connection to reset the domain information and security credentials, and one for communicating with the SPS.
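To make the battery trade-off a bit more concrete, here is a toy model in Python of the two behaviours described above: the EVA refusing to present LUNs and the CX3 falling back to write-through. It is a hypothetical sketch, not how HP or EMC firmware actually works. The names `ToyController` and `effective_policy` are made up, and so is the `override` flag, which stands in for the switch I'd love to have and shows what it would cost.

```python
from enum import Enum


class Policy(Enum):
    WRITE_BACK = "write-back"            # ack once data is in (volatile) cache
    WRITE_THROUGH = "write-through"      # ack only once data is on disk
    NOT_PRESENTED = "LUNs not presented"


def effective_policy(battery_ok: bool, block_on_fault: bool, override: bool = False) -> Policy:
    """Pick a cache policy from battery health (toy model, not vendor logic).

    block_on_fault=True mimics the EVA behaviour from the post,
    block_on_fault=False mimics the CX3 (write cache disabled).
    `override` is a hypothetical switch; neither vendor is assumed to offer it.
    """
    if battery_ok or override:
        return Policy.WRITE_BACK
    return Policy.NOT_PRESENTED if block_on_fault else Policy.WRITE_THROUGH


class ToyController:
    def __init__(self, battery_ok: bool, block_on_fault: bool, override: bool = False):
        self.battery_ok = battery_ok
        self.policy = effective_policy(battery_ok, block_on_fault, override)
        self.cache: list[int] = []  # acknowledged, not yet destaged
        self.disk: list[int] = []   # persisted blocks

    def write(self, block: int) -> None:
        if self.policy is Policy.NOT_PRESENTED:
            raise OSError("no LUNs presented: cache batteries failed")
        if self.policy is Policy.WRITE_BACK:
            self.cache.append(block)  # fast ack, data still volatile
        else:
            self.disk.append(block)   # slow ack, data already safe

    def power_failure(self) -> int:
        """Simulate a power cut; return how many acknowledged writes are lost."""
        if self.battery_ok:
            # a healthy battery keeps the cache alive so it can be destaged
            self.disk.extend(self.cache)
            lost = 0
        else:
            lost = len(self.cache)
        self.cache.clear()
        return lost


if __name__ == "__main__":
    cx3 = ToyController(battery_ok=False, block_on_fault=False)
    for b in range(3):
        cx3.write(b)
    print(cx3.policy, cx3.power_failure())      # Policy.WRITE_THROUGH 0

    hobby = ToyController(battery_ok=False, block_on_fault=False, override=True)
    for b in range(3):
        hobby.write(b)
    print(hobby.policy, hobby.power_failure())  # Policy.WRITE_BACK 3
```

The "hobby" controller is fast until the power goes out, and then every acknowledged write still sitting in cache is gone. That is exactly why HP chose to block presentation instead.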

EMC AX150i

I have to boot this beauty (Arrrr!) up again sometime; I like it. I just don't know why it is still blinking orange. It works, it serves, and all functions are enabled. I'll get back to that one.

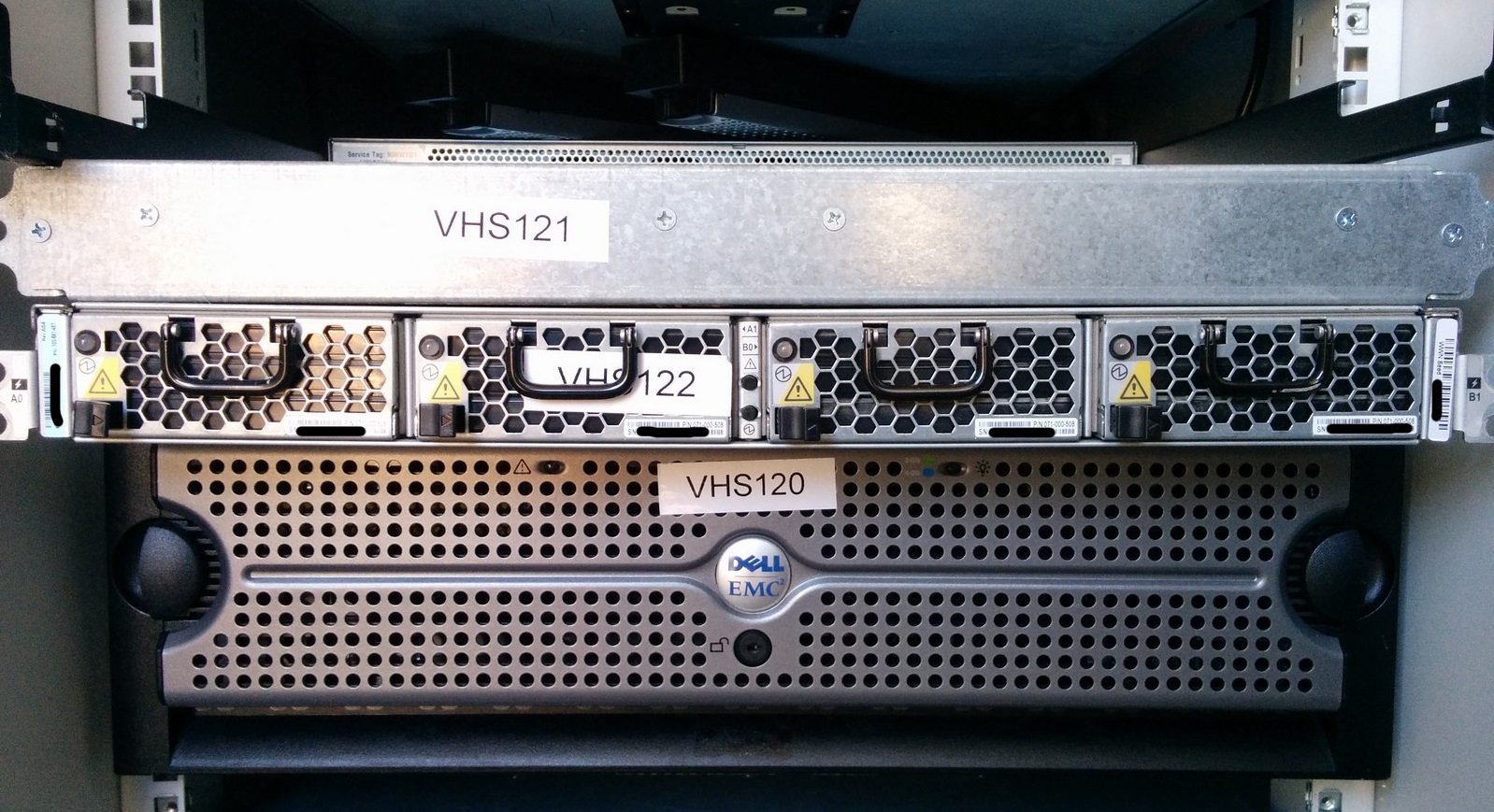

DELL EQUALLOGIC

Ah, now that's a very straightforward and feature-rich iSCSI unit. Without licensing hassle you get the lot, including replication, very nasty snapshotting and often ineffective load balancing when put in a group. But when you don't use those things, they're quite OK. There are four EqualLogics in the Stack. The two PS6000Xs, with 400GB and 450GB disks, are together with a PS6000E the strongest in the collection. That PS6000E with 8 x 250GB SATA disks has a rough edge, though. After transportation from one office to the other, it started off with a missing DIMM0, a broken RAID LUN 0, 9 failed disks, and a reluctance to be discovered by the Remote Setup Wizard. But I got that all fixed, so there is still hope. And we have a PS4000E with 8 x 250GB SATA disks and a single controller for beta testing. That one takes a beating once in a while, but still operates nice and sweet. Perfect boxes to quickly set up a small virtualization environment, for example.

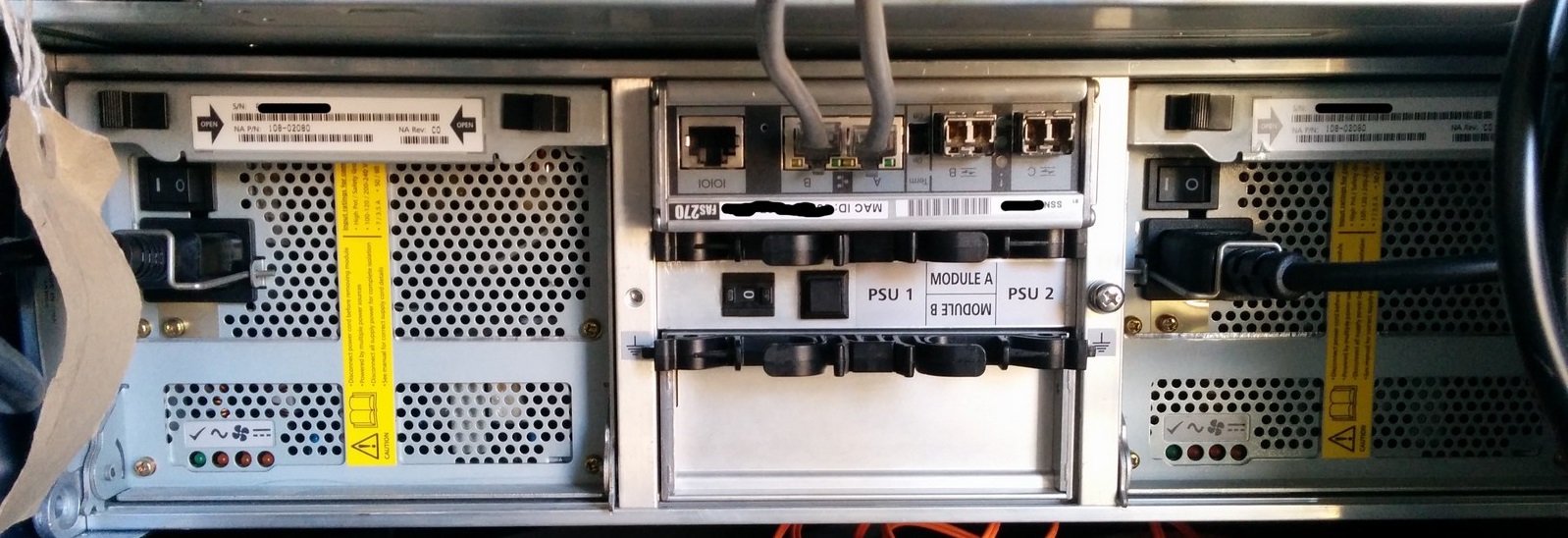

NETAPP FAS270

One of my favorites in the Stack is my old trusty NetApp FAS270.

The controller is shoved into the back of a DS14 Mk1 shelf with 14 × 144GB 1Gb FC disks, which causes nice beeps at boot and errors in Data ONTAP because of missing environmental sensors: it expects a DS14 Mk2 or a FAS270-labeled shelf. But it works! Two 1GbE ports for iSCSI connectivity is all, but hey, there are some FC ports as well. I'm betting the FC "C" port can connect a shelf? It only nags about a low cache battery for less than 20 minutes; after that the battery is nicely charged and all functions are enabled. Now that's quality 🙂 It just doesn't have any performance.

NETAPP FAS940

One of the biggest heads I've ever had: a 6U monster weighing in at 48 kg. But with the right I/O cards, it was fully multiprotocol (for that time) with NFS, iSCSI and FC. Oh, and add CIFS and HTTP as well. PCI-X I/O expansion, a 256MB NVRAM card and 3GB of ECC system memory. And that's only one head; when running clustered you needed two of these molochs. I have had this one running with a number of DS14 Mk1 shelves with 14x72GB FC disks, in two separate dual loops. It had an FC target HBA, three 2-port gigabit NICs and a remote management card. Basically it's just an Intel machine with a 2.8GHz Xeon on a NetApp-designed PCB. It occasionally nagged about the controller battery as well, but as with the FAS270, the warning cleared half an hour later.

This is the setup that ran on HAR2009. Flawlessly, might I add.

NETAPP FAS2020

The "modern" version of the FAS270, with the controller in the shelf. Better (or less bad) performance, with SAS disks: eight 250GB disks, to be precise. Sadly, this one is equipped with only one controller, so no cluster. But its battery also charges within a short time. Does anyone have a second controller? It also has FC and iSCSI front-end ports, and all the same protocols as the FAS940. All in a nice 2U package.

HP MSA2000i

The HP MSA2000i in the Stack has never been fully tested, partly because the second controller failed fairly quickly, and I am afraid that failure is battery related as well. Still, the iSCSI setup of this unit is quite something.

And last but not least ..

StorageWorks RA4100

Yes. A real FC-AL storage unit. I can't remember the precise specs, but it had 12 SCSI U160 disks. Indestructible. It came with two 1Gb FC-AL HBAs, and I built a real physical Windows 2000 Server failover cluster on it. Hurray.

WISHLIST

The Vintage Hardware Stack is always open for donations. Almost anything storage-like is welcome. But there are a couple of personal favorites I still want to revive. A NetApp switched MetroCluster, for example. And a working EVA; I sure hope to find good cache batteries someday. Oh, and a 3PAR and a Compellent, just to see where they fit in (or sometimes don't).